- Skymizer says giant AI models no longer need hyperscale GPU infrastructure

- Old 28nm chips are suddenly powering massive language models at surprisingly low power

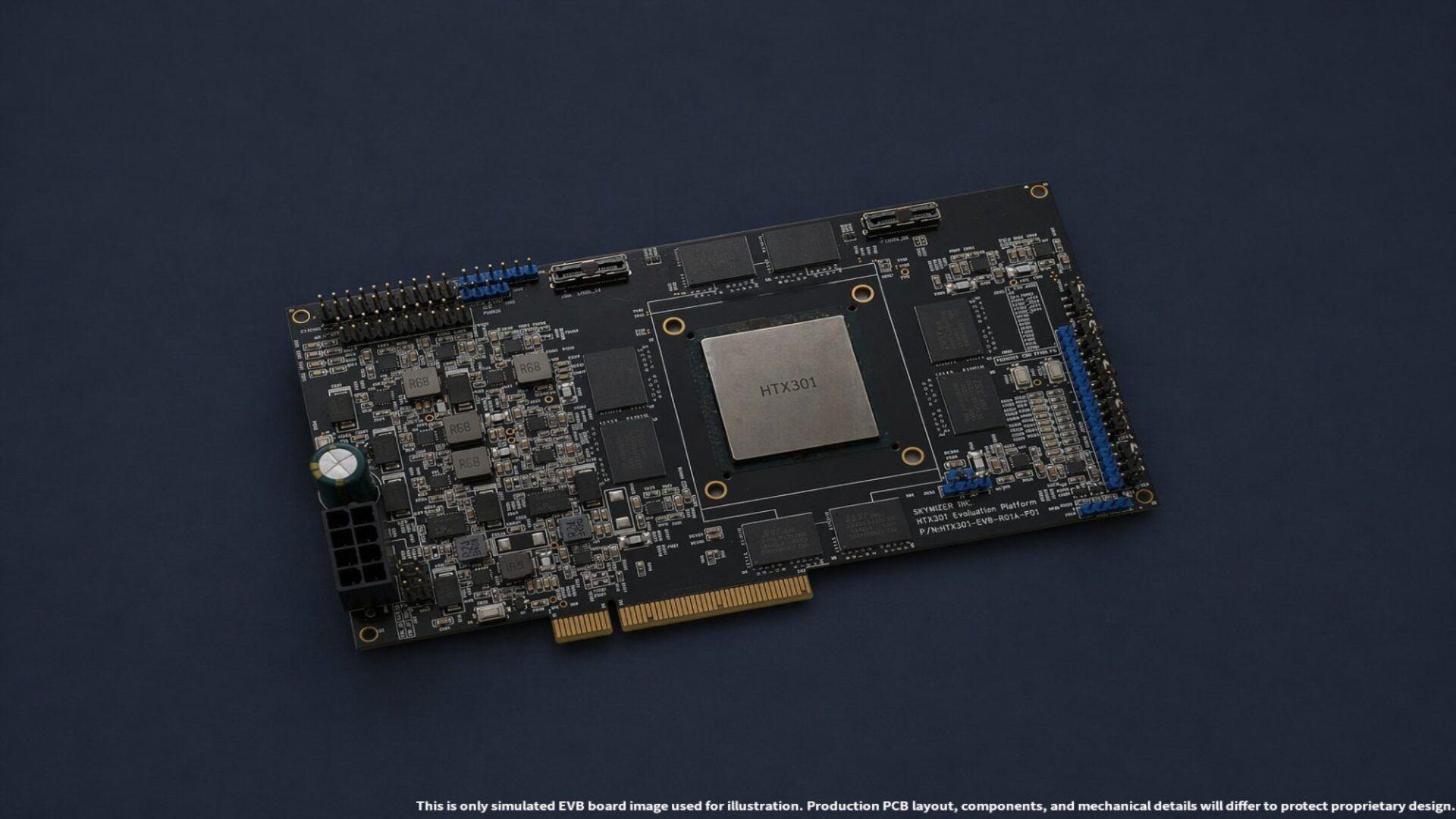

- The HTX301 integrates 384 GB of memory into a single PCIe accelerator card

A Taiwanese company called Skymizer has unveiled a PCIe AI accelerator that challenges AMD and Nvidia using surprisingly old technology.

The HTX301 card can run language models with up to 700 billion parameters on a single device while consuming only 240 watts of power.

The card achieves this feat by using older 28-nanometer chips and standard LPDDR4 and LPDDR5 memory instead of expensive HBM or GDDR solutions.

Old tech chips compete with modern AI accelerators

Skymizer claims its card delivers 30 tokens per second with just 0.5 TOPS of compute and 100 GB/s of memory bandwidth.
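To put the bandwidth figure in context, a rough back-of-the-envelope bound helps: if token generation is limited by memory bandwidth and every weight is read once per generated token, throughput cannot exceed bandwidth divided by the model's size in memory. The sketch below applies that rule of thumb. Only the 100 GB/s figure comes from Skymizer's claim; the 7-billion-parameter model size and the 4-bit weight format are assumptions chosen for illustration, and the function name is hypothetical.

```python
def decode_tokens_per_second(bandwidth_gb_s: float,
                             params_billion: float,
                             bits_per_weight: float) -> float:
    """Upper bound on decode speed if every weight is read once per token.

    Ignores KV-cache traffic, activations, and compute limits, so real
    throughput would normally land below this number.
    """
    # Billions of parameters * bits per weight / 8 = gigabytes read per token.
    gb_read_per_token = params_billion * bits_per_weight / 8
    return bandwidth_gb_s / gb_read_per_token


# 100 GB/s is the claimed bandwidth; 7B parameters and 4-bit weights
# are illustrative assumptions, not published Skymizer specifications.
estimate = decode_tokens_per_second(100.0, 7.0, 4.0)
print(f"{estimate:.1f} tokens/s")  # prints "28.6 tokens/s"
```

Under those assumptions the bound sits close to the quoted 30 tokens per second, which is at least consistent with a bandwidth-limited design, although it does not confirm how Skymizer measured its figure.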

The HTX301 is built on Skymizer’s HyperThought platform, which includes next-generation LPU IP designed specifically for large language model workloads.

Each PCIe card contains six HTX301 chips working together and offers up to 384 GB of total memory capacity.

The design uses efficient compression techniques for weights and KV cache, outperforming the open-source llama.cpp by 9-17.8%.
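The capacity figures explain why compression matters here. A quick calculation, using only the numbers quoted in this article (384 GB per card, six chips, up to 700 billion parameters), shows how many bits per parameter the card can afford. The sketch assumes 1 GB means 10^9 bytes and that all memory holds weights, which overstates the budget because the KV cache also needs space; the helper name is hypothetical.

```python
CARD_MEMORY_GB = 384        # total memory per card, from the article
CHIPS_PER_CARD = 6          # chips per card, from the article
MAX_PARAMS_BILLION = 700    # largest model size claimed, from the article


def bits_per_parameter(memory_gb: float, params_billion: float) -> float:
    """Average bits available per parameter if memory held only weights."""
    # GB * 8 gives gigabits; dividing by billions of parameters gives bits each.
    return memory_gb * 8 / params_billion


per_chip_gb = CARD_MEMORY_GB / CHIPS_PER_CARD
budget_bits = bits_per_parameter(CARD_MEMORY_GB, MAX_PARAMS_BILLION)
fp16_gb = MAX_PARAMS_BILLION * 16 / 8  # memory needed at 16 bits per weight

print(f"Memory per chip: {per_chip_gb:.0f} GB")            # 64 GB
print(f"Bit budget per parameter: {budget_bits:.2f}")      # 4.39
print(f"Same model at 16-bit precision: {fp16_gb:.0f} GB")  # 1400 GB
```

In other words, a 700-billion-parameter model only fits on one card if weights average well under 5 bits each, compared with roughly 1,400 GB at 16-bit precision.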

Its power consumption is less than half of what leading PCIe AI accelerators from AMD and Nvidia typically require.

The board supports agentic AI for domain-specific coding, automation, and workflows without the need for hyperscale GPU clusters.

Running large language models in the cloud leads to privacy concerns and unpredictable costs that many organizations find unacceptable.

Upgrading on-premises infrastructure to support massive GPU accelerator platforms often requires a costly overhaul of the data center’s power and cooling systems.

Skymizer’s HTX301 gives businesses a third option that fits into standard air-cooled servers without any infrastructure changes.

The company claims that, with its new technology, the era of needing hyperscale GPU clusters for ultra-large LLMs is over.

The PCIe card form factor allows businesses to scale on-premises AI inference while maintaining data sovereignty and predictable infrastructure costs.

Skymizer HTX301 awaits real-world testing

Skymizer will demonstrate the HTX301 at Computex this year, allowing independent verification of its performance.

This chip’s specs look impressive on paper, but real-world testing will determine whether the card actually delivers 240 tokens per second on Llama2 7B workloads.

AMD recently launched its Instinct MI350P PCIe card with 144 GB of HBM3E memory and up to 4,600 peak TFLOPS at MXFP4 precision, but it consumes considerably more power than Skymizer’s offering.

Nvidia’s RTX PRO 6000 Blackwell draws around 600 watts, more than double what the Skymizer card needs for comparable inference tasks.

If the HTX301 performs as advertised, it could significantly lower the barrier to entry for on-premises AI infrastructure.

Failure would place Skymizer among the many startups that could not deliver on their promises.

Via Wccftech
