If there was ever evidence that people are developing a deep emotional dependence on ChatGPT, it’s probably OpenAI’s new Trusted Contact feature.

Speaking at Sequoia Capital’s AI Ascent event last May, Sam Altman, CEO of OpenAI, said young people were using ChatGPT as a lifelong operating system – not just for productivity, but for major personal decisions.

“I mean, I think the whole thing is cool and awesome,” Altman said. “And there’s this other thing where, like, they don’t really make life decisions without asking ChatGPT what they should do.”

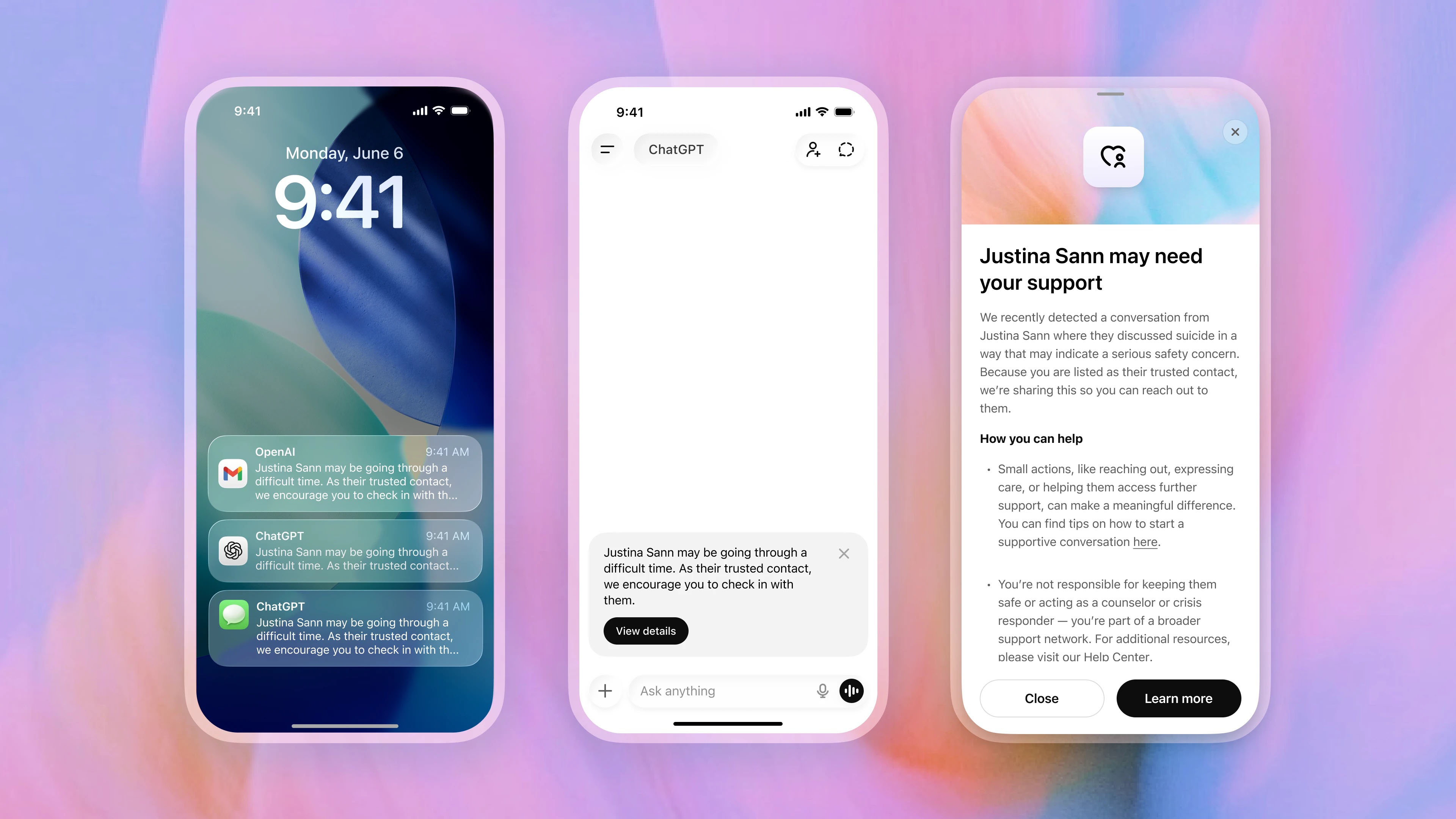

The feature is still rolling out, so Trusted Contact isn’t available to everyone yet, but to find it, you click or tap your profile name in ChatGPT, then look in Settings. You can designate a trusted adult contact, who must accept the role before the feature becomes active.

If ChatGPT’s automated systems detect conversations that may indicate a serious risk of self-harm, the user is told that their trusted contact may be notified, and is encouraged to reach out to that person themselves first.

A specially trained human review team then assesses the situation before any alert is sent. If reviewers believe there is a genuine safety concern, the trusted contact receives a notification via email, SMS, or in-app alert inviting them to check in.

OpenAI says alerts do not include chat transcripts or detailed conversation history to protect user privacy, and that you can delete or edit your trusted contact at any time.

Reassuring or destabilizing?

OpenAI says Trusted Contact was developed with input from mental health experts, suicide prevention specialists, and a global network of more than 260 doctors across 60 countries. Taken together with the parental controls OpenAI has already introduced and the safety guardrails already in place, Trusted Contact is another sign that the company recognizes that ChatGPT can affect users on an emotional level, not just a technological one.

OpenAI’s recent product announcements have downplayed the use of ChatGPT as a confidant and placed more emphasis on its productivity uses, particularly the Codex coding tool. Yet at the same time, more and more safety features aimed at the emotional well-being of ChatGPT users are being added.

The idea that we are now being monitored by ChatGPT also worries some. When my colleague Becca Caddy recently interviewed Amy Sutton of Freedom Counseling for an investigation into AI monitoring tools in the workplace, Sutton noted that knowing you’re being monitored by your AI, especially at work, could actually make the problem it’s trying to solve worse. She commented: “With mental health stigma still prevalent, AI observation would likely lead to greater efforts to hide evidence of struggles. This could create a dangerous spiral, in which the more effort we make to hide bad mood or anxiety, the worse the situation becomes.”

Whether Trusted Contact is reassuring or troubling probably depends on how you already view AI and ChatGPT. But this feature is another example of how AI companies recognize that their products are not just productivity and information tools, but also systems that people can increasingly rely on emotionally during some of the most vulnerable moments of their lives.
