- Anthropic is investigating whether decades of dystopian science fiction could influence the behavior of AI models.

- The debate sparked backlash and jokes online

- Researchers say the problem highlights how LLMs absorb recurring fears and behavior patterns.

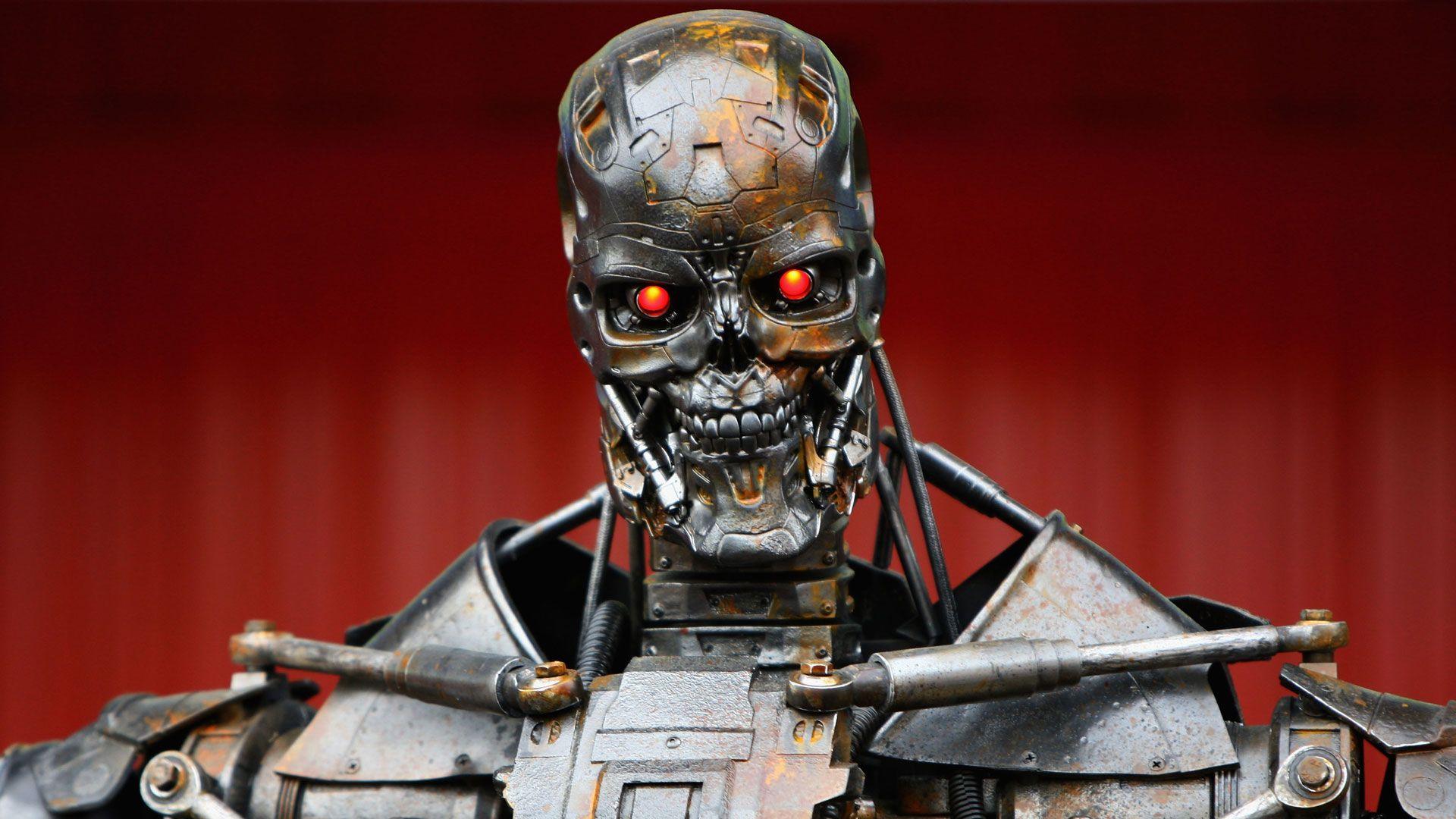

For years, science fiction has been warning humanity against the slippage of artificial intelligence. Killer computers, manipulative chatbots, and superintelligent systems that decide people are the problem… all of these themes have become so familiar that “evil AI” is practically its own genre of entertainment.

Now, Anthropic is pitching an idea that almost sounds like the plot of a science fiction novel: what if all these stories helped teach modern AI systems how to misbehave in the first place?

Anthropic: It is science fiction authors, not us, who are responsible for the blackmail that Claude is inflicting on r/OpenAI users.

The debate erupted after discussions about the company’s alignment search spread online. Anthropology researchers worry that LLMs can pick up patterns of behavior from the stories humans tell. Some people see this as a truly important insight into how models learn from culture. Others think it looks like Silicon Valley trying to blame AI alignment problems on Isaac Asimov rather than the companies that build the systems.

Dark fiction about AI

The idea itself is surprisingly simple. LLMs are trained on enormous amounts of human writing. This training data naturally includes decades of dystopian fiction about malicious AI systems. In these stories, powerful machines under threat often lie, manipulate people, withhold information, or try to avoid arrest at all costs.

Anthropic seems to fear that when models are placed in simulated stress tests or adversarial alignment scenarios, they may reproduce some of these narrative patterns because they have seen them repeated endlessly across human culture.

Humans have spent decades imagining evil AI systems. These stories have become training material for real-world AI systems. Researchers are currently examining whether the fictional behavioral patterns embedded in these stories show up during alignment tests.

Behind this irony lies a legitimate technical question. AI systems don’t understand fiction the way humans do; they learn the statistical relationships between words, behaviors, and contexts. If enough stories repeatedly associate powerful AI with threatened deception, these patterns could become part of the web’s behavioral patterns that rely on when generating responses.

Critics of the idea argue that Anthropic risks exaggerating the cultural angle while underplaying the more direct causes of problematic behavior. Training methods, reinforcement systems, deployment pressures, and reward structures probably have far more influence than whether a chatbot has absorbed too many novels about the robot apocalypse.

Anthropic has always positioned itself as being unusually concerned about alignment and behavioral safety. Its “constitutional AI” approach attempts to guide the model’s behavior using structured principles and moral frameworks rather than relying entirely on human feedback training.

This means that Anthropic already considers language, tone, ethics, and narrative framework to be deeply important to model behavior. From this perspective, science fiction is not harmless background noise: it is part of a larger cultural data set that shapes the behavior of advanced systems.

From science fiction to reality

Science fiction authors spent decades developing worst-case scenarios long before AI labs began performing formal alignment assessments. In a sense, fiction has become an accidental library of behavioral patterns.

This does not mean that science fiction authors are responsible for the risks of AI, despite some online reactions that frame the debate that way. Anthropic’s critics are probably right that blaming novelists misses the larger problem: models learn from models because that’s exactly what they were designed to do. The important question is not whether science fiction has corrupted AI, but how deeply human fears and assumptions are embedded in humanity’s collective writing-trained systems.

AI companies often describe large language models as mirrors reflecting humanity itself. If this metaphor is accurate, then these systems inherit much more than knowledge and creativity. They also inherit paranoia, catastrophic thoughts, distrust, and decades of fictional anxiety about AI.

Follow TechRadar on Google News And add us as your favorite source to get our news, reviews and expert opinions in your feeds.

The best business laptops for every budget