- Installing a single gigawatt AI facility costs nearly $80 billion

- Planned AI capacity across the sector could total 100 GW

- High-end GPU hardware must be replaced, not extended, roughly every five years

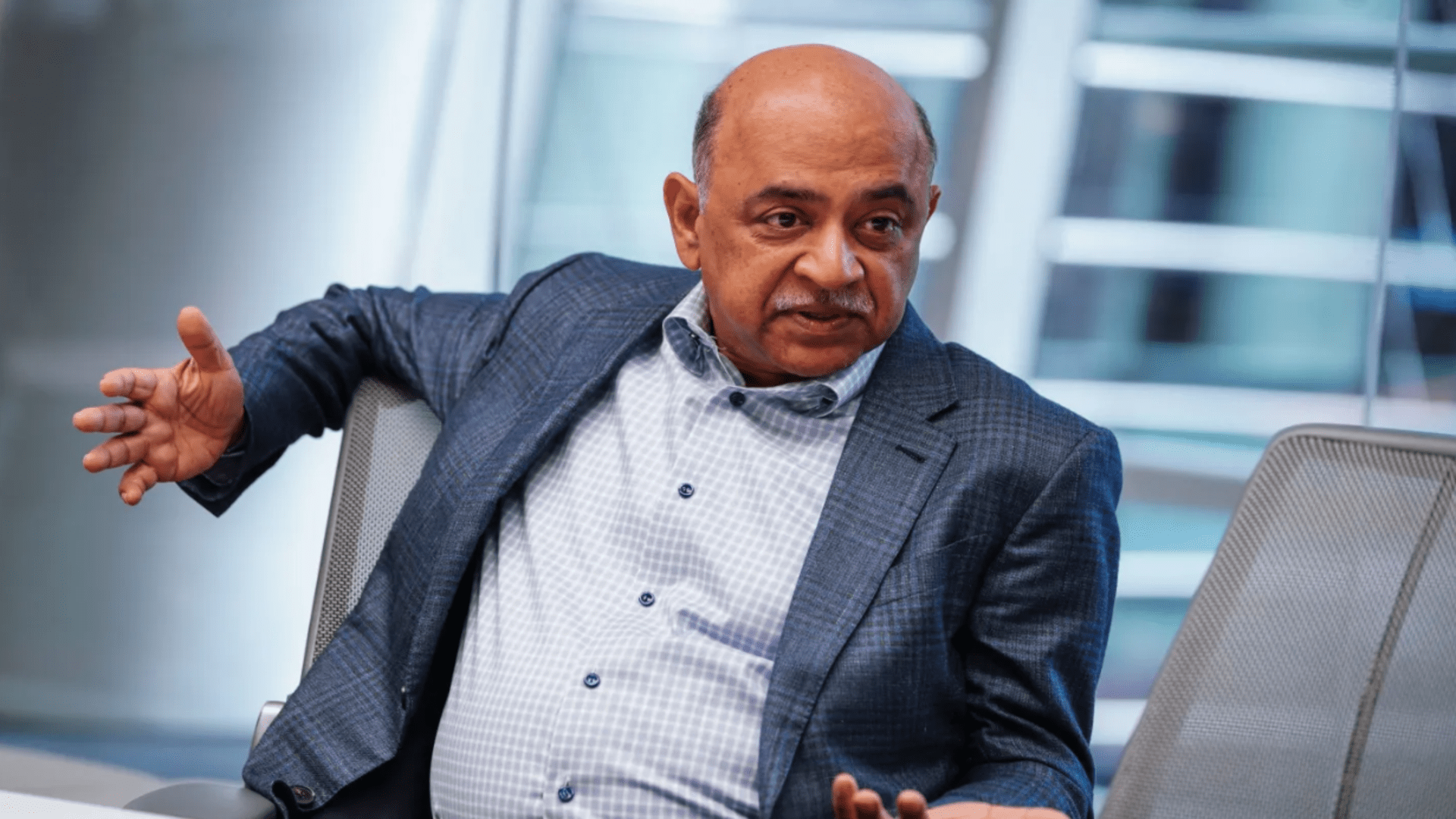

IBM Chief Executive Arvind Krishna questions whether the current pace and scale of AI data center expansion can remain financially viable under current assumptions.

He estimates that equipping a single 1 GW site with hardware now costs close to $80 billion.

With public and private plans indicating nearly 100 GW of future capacity earmarked for advanced model training, the implied financial exposure amounts to $8 trillion.
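The $8 trillion figure follows directly from the two estimates above. A minimal sketch of that arithmetic, using the article's rounded numbers (the function and constant names are illustrative, not from the source):

```python
# Rounded figures quoted in the article.
COST_PER_GW_USD = 80_000_000_000   # roughly $80 billion to equip 1 GW
PLANNED_CAPACITY_GW = 100          # roughly 100 GW of announced capacity


def total_exposure_usd(capacity_gw: int, cost_per_gw_usd: int = COST_PER_GW_USD) -> int:
    """Implied hardware spend if every planned gigawatt is built at the quoted cost."""
    return capacity_gw * cost_per_gw_usd


exposure = total_exposure_usd(PLANNED_CAPACITY_GW)
print(f"Implied exposure: ${exposure / 1e12:.1f} trillion")
```

Both inputs are described as approximate ("nearly"), so the result is an order-of-magnitude estimate rather than a precise budget.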

Economic Burden of Next-Generation AI Sites

Krishna directly connects this trajectory to the refresh cycle that governs today’s accelerator fleets.

Most of the high-end GPU hardware deployed in these centers depreciates over about five years.

At the end of this window, operators do not extend the life of the equipment but replace it entirely. The result is not a one-time capital outlay, but a recurring obligation that compounds over time.
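To see why a fixed refresh cycle turns a single purchase into a recurring one, the sketch below counts full hardware purchases over a planning horizon. It assumes, purely for illustration, that each replacement costs the same as the original build; the article suggests hardware is getting more expensive, so this likely understates the total. The function name and the 15-year horizon are hypothetical.

```python
def cumulative_hardware_spend(cost_per_build_usd: int, refresh_years: int, horizon_years: int) -> int:
    """Total hardware spend over a horizon with full replacement every refresh cycle.

    Assumes a purchase at year 0 and another at each refresh boundary that
    falls strictly inside the horizon, all at a constant cost (an assumption).
    """
    if refresh_years <= 0 or horizon_years <= 0:
        raise ValueError("refresh_years and horizon_years must be positive")
    purchases = 1 + (horizon_years - 1) // refresh_years
    return purchases * cost_per_build_usd


# One 1 GW site at about $80 billion, refreshed every five years, over 15 years:
# purchases land at years 0, 5 and 10.
spend = cumulative_hardware_spend(80_000_000_000, refresh_years=5, horizon_years=15)
print(f"${spend / 1e9:.0f} billion over 15 years")
```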

CPU resources are also part of these deployments, but they are no longer the focus of spending decisions.

The balance has shifted toward specialized accelerators that process massively parallel workloads at a rate unmatched by general-purpose processors.

This shift has significantly changed the definition of scale for modern AI facilities and pushed capital requirements beyond what traditional enterprise data centers once required.

Krishna argues that depreciation is the factor most often misunderstood by market participants.

The pace of architectural change means that performance gains arrive faster than the cost of existing hardware can be written off.

Equipment that is still functional becomes economically obsolete well before the end of its physical lifespan.

Investors such as Michael Burry are raising similar doubts about the ability of cloud giants to continue to extend the lifespan of their assets as model sizes and training demands increase.

From a financial perspective, the burden no longer lies in energy consumption or land acquisition, but in the forced replacement of increasingly expensive hardware.

In desktop environments, similar refresh dynamics already exist, but the scale is fundamentally different within hyperscale sites.

Krishna calculates that covering the cost of capital for these multi-gigawatt campuses would require hundreds of billions of dollars in annual profit just to break even.
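The scale of that break-even figure can be sketched from the total exposure and a cost-of-capital rate. The 10% rate below is an assumption chosen for illustration and does not appear in the article; the function name is likewise hypothetical.

```python
def annual_capital_cost_usd(capital_usd: float, cost_of_capital: float) -> float:
    """Yearly profit needed just to service the capital, before any return to owners."""
    if cost_of_capital < 0:
        raise ValueError("cost_of_capital must be non-negative")
    return capital_usd * cost_of_capital


TOTAL_EXPOSURE_USD = 8e12        # about $8 trillion, from the article
ASSUMED_COST_OF_CAPITAL = 0.10   # hypothetical rate, NOT from the article

required_profit = annual_capital_cost_usd(TOTAL_EXPOSURE_USD, ASSUMED_COST_OF_CAPITAL)
print(f"About ${required_profit / 1e9:.0f} billion per year")
```

Any rate in the mid single digits or above still lands in the hundreds of billions per year, which is consistent with the range Krishna describes.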

This requirement is based on current hardware economics rather than speculative long-term efficiency gains.

These projections come as major tech companies announce ever-larger AI campuses, measured not in megawatts but in tens of gigawatts.

Some of these proposals already rival the electricity demand of entire countries, raising parallel concerns about grid capacity and long-term energy pricing.

Krishna believes that there is almost no chance that current LLMs will achieve general intelligence on the next generation of hardware without a fundamental change in knowledge integration.

This assessment frames the wave of investment as being motivated more by competitive pressure than by a validated technological inevitability.

The interpretation is difficult to avoid: the build-out assumes that future revenues will rise to match unprecedented spending.

This is happening even as depreciation cycles shorten and power limits tighten in several regions.

The risk is that financial expectations are running ahead of the economic mechanisms needed to sustain them throughout the life cycle of these assets.

Via Tom's Hardware