- Hugging Face launched HuggingSnap, an iOS app that can analyze and describe what your iPhone's camera sees.

- The application works offline, without sending data to the cloud.

- HuggingSnap is imperfect but demonstrates what can be done entirely on-device.

Giving AI the power of sight is becoming more and more common as ChatGPT, Microsoft Copilot, and Google Gemini roll out vision features for their AI tools. Hugging Face has just put its own spin on the idea with a new iOS app called HuggingSnap, which looks at the world through your iPhone's camera and describes what it sees without ever connecting to the cloud.

Think of it as having a personal tour guide who knows how to keep their mouth shut. HuggingSnap runs completely offline using Hugging Face's in-house vision model, SmolVLM2, to deliver instant object recognition, scene descriptions, text reading, and general observations about your surroundings without any of your data being sent to the internet.

This offline capability makes HuggingSnap particularly useful wherever connectivity is spotty. If you're hiking in the desert, traveling abroad without reliable internet, or simply standing in one of those grocery store aisles where cell service mysteriously vanishes, having that capability on your phone is a real boon. The app also claims to be highly efficient, which means it shouldn't drain your battery the way cloud-based AI models do.

HuggingSnap looks at my world

I decided to give the app a whirl. First, I pointed it at my laptop screen while my browser was open to my TechRadar bio. At first, the app did a solid job of transcribing the text and explaining what it saw. However, it drifted from reality when it looked at the headlines and other details around my bio. HuggingSnap assumed that references to new computer chips in a headline indicated what powers my laptop, and seemed to think some of the names in the headlines belonged to other people who use my laptop.

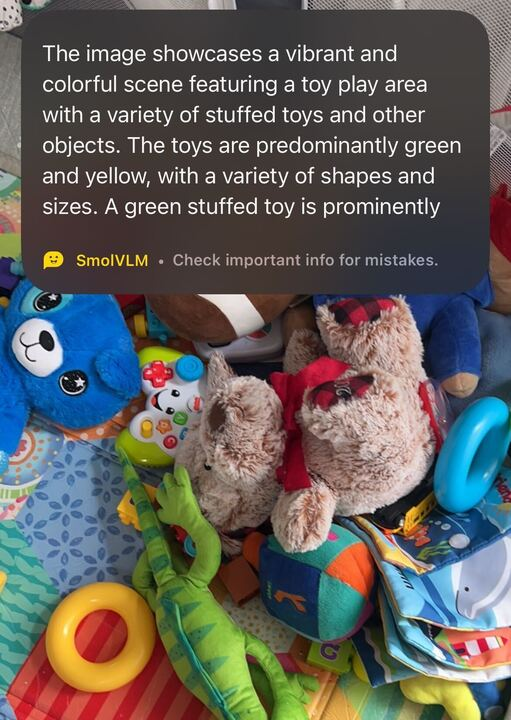

I then pointed my camera at my son's playpen, which was full of toys I hadn't cleaned up yet. Again, the AI did a great job with the broad strokes, describing the play area and the toys inside it. It got the colors right and even the textures, distinguishing the stuffed toys from the blocks. It also tripped up on some details. For example, it called a bear a dog and seemed to think a stacking ring was a ball. Overall, I'd call HuggingSnap's AI great for describing a scene to a friend but not good enough for a police report.

Seeing the future

HuggingSnap's on-device approach sets it apart from your iPhone's built-in capabilities. Although the iPhone can identify plants, copy text from images, and tell you whether that spider on your wall is the kind that should make you move, it almost always has to send information to the cloud to do so.

HuggingSnap stands out in a world where most apps want to track everything about you, down to your blood type. That said, Apple is investing heavily in on-device AI for its future iPhones. But for now, if you want privacy with your AI vision, HuggingSnap could be perfect for you.