- ZAM stacks nine functional memory layers vertically inside each compact module

- Each ZAM memory layer would contain exactly 1.125 GB of DRAM capacity

- Estimated ZAM bandwidth numbers are now getting close to Nvidia HBM4 performance territory

The architecture of computer memory will undergo a significant structural transformation in the years to come.

A new design called Zero-Angle Memory (ZAM) stacks the chips vertically rather than spreading them across a flat surface, a change that could increase data transfer speeds while reducing power consumption.

Intel has thrown its weight behind this technology as a potential replacement for existing HBM memory.

Inside the nine-layer ZAM memory design

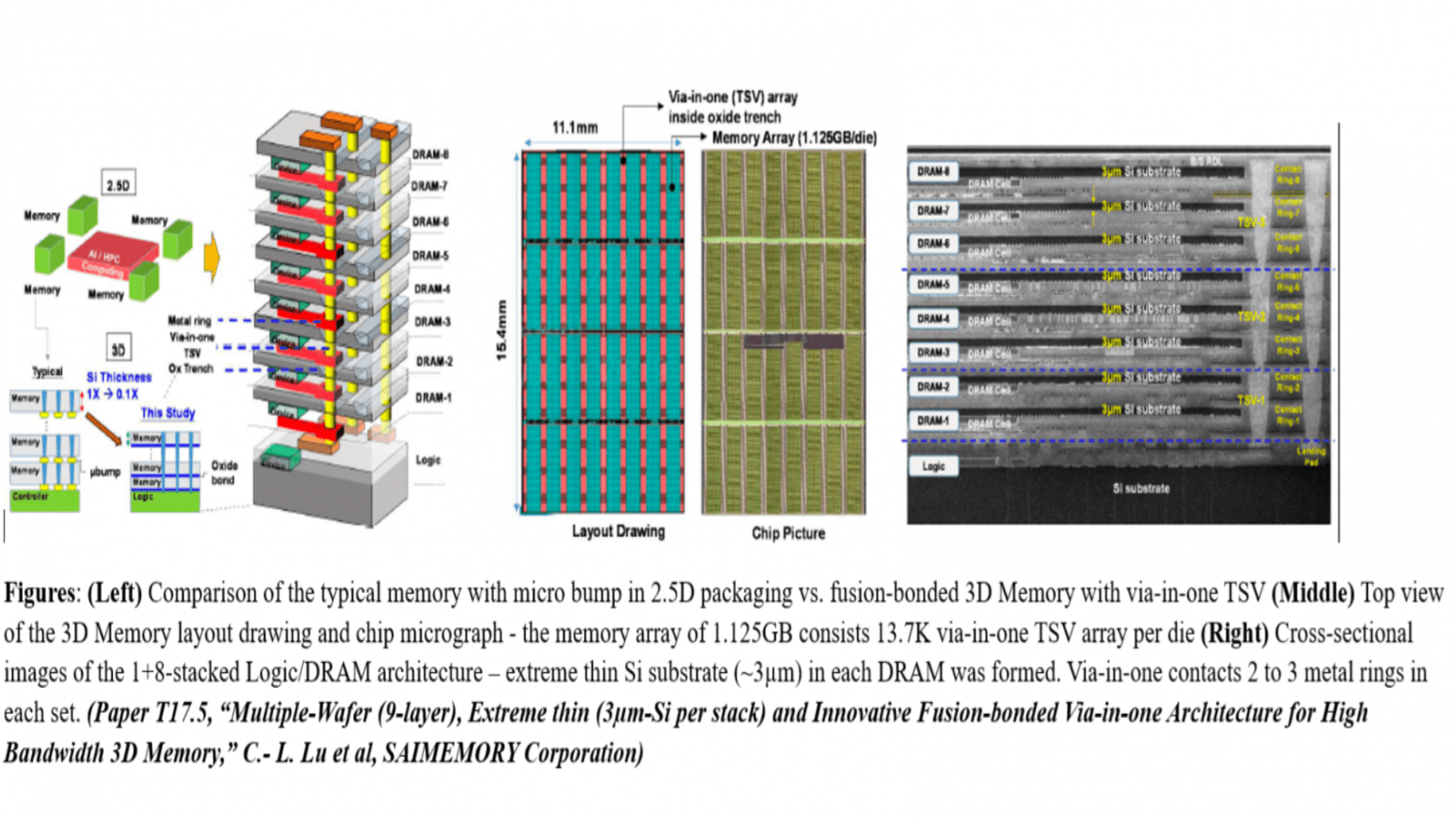

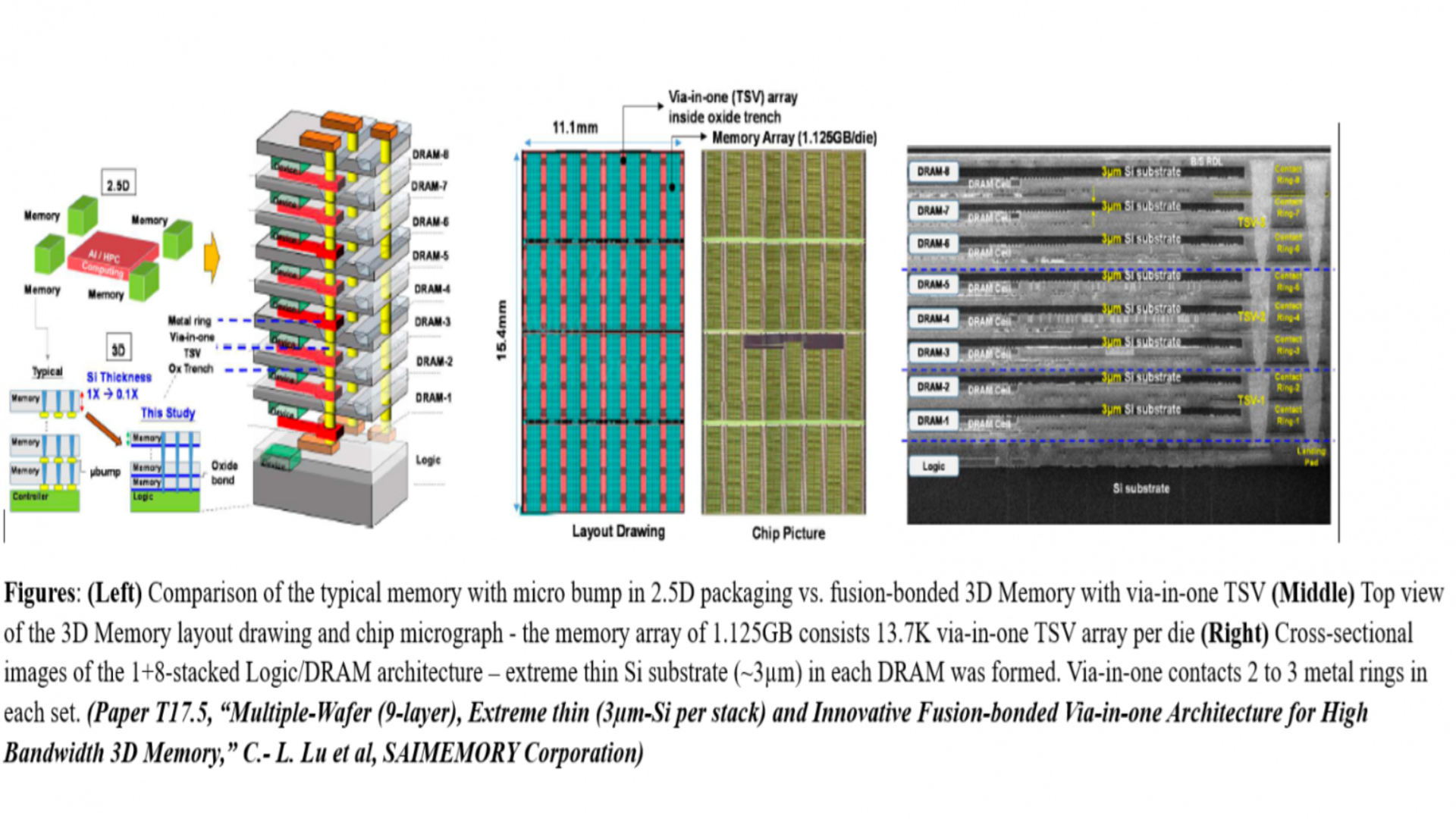

Technical diagrams from an upcoming VLSI conference paper have now revealed the internal details of this memory design.

Eight distinct DRAM storage layers sit under a single control layer within each ZAM module built by the consortium.

This arrangement gives each module a total of nine functional layers stacked vertically on top of each other.

Images in the conference paper show how each of the eight DRAM layers contains exactly 1.125 GB of storage capacity.

The basic calculation therefore provides approximately 9 GB of total memory per ZAM module before any overhead deductions.

Three through-silicon vias (TSVs) pass through the entire vertical stack to electrically connect each layer from top to bottom.

Intel developed the fusion method that creates these TSV connections with extreme precision and reliability.

Each DRAM layer is separated from its neighbor by a silicon substrate just 3 microns thick.

These TSVs attach to two or three metal rings on each layer for stable electrical flow.

Bandwidth estimates derived from prior claims now place ZAM close to the HBM4 performance figures of Nvidia’s Vera Rubin platform.

ZAM targets HBM4 class bandwidth

A Japanese company called Saimemory Corporation is leading the efforts to commercialize this Intel-backed technology.

Saimemory operates as a wholly owned subsidiary of SoftBank and has yet to release official data pricing for this new memory design.

Previous statements from the company suggested two to three times speedup over current HBM3 memory standards.

HBM3 currently offers 819 GB/s (or 6.4 Gbps) of bandwidth in its standard configuration. So a three-fold increase from this benchmark would give ZAM around 2.5 TB/s of total throughput for AI processors.

Nvidia’s Vera Rubin AI platform is said to rely on HBM4 for its highest bandwidth configurations available.

This performance parity places Intel’s HBM-killer memory technology in direct competition with Nvidia’s preferred memory standard.

Currently, no working prototypes of ZAM have yet been demonstrated to independent evaluators or third-party testing laboratories worldwide.

Fabricating eight bonded layers without introducing defects remains a difficult and unproven industrial challenge for this consortium.

HBM4 already benefits from Nvidia’s established production roadmap and existing global supply chains from multiple suppliers.

A memory standard with superior technical specifications often fails without broad ecosystem adoption and industry support over time.

The June VLSI conference presentation will determine whether Intel’s claims about the HBM killer extend beyond paper diagrams to physical reality.

Via FIL HPC

Follow TechRadar on Google News And add us as your favorite source to get our news, reviews and expert opinions in your feeds.