- Intel and Google signed a multi-year agreement to keep Xeon in cloud infrastructure

- Google Cloud C4 and N4 instances already run on Xeon 6 processors

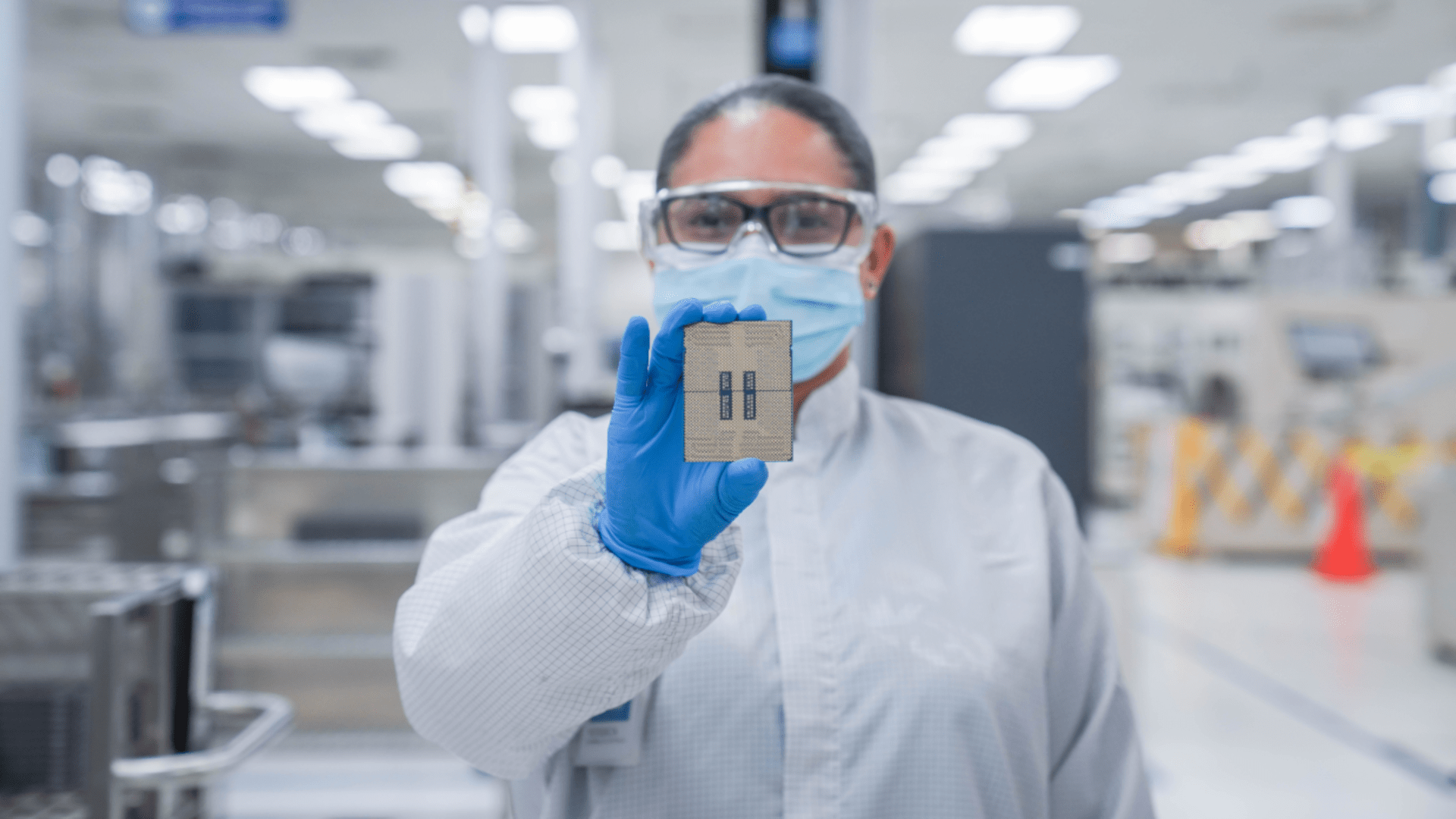

- Intel and Google co-develop custom IPUs for networking and storage

Intel and Google announced a multi-year collaboration that will keep Intel Xeon processors at the heart of Google Cloud infrastructure for the foreseeable future.

The deal covers multiple generations of Xeon chips and includes systems used for AI workloads, inference tasks and general-purpose computing in Google’s global data centers.

Google Cloud instances like C4 and N4 already rely on Xeon 6 processors, and this deal ensures that trend continues.

Why CPUs Still Matter in the Age of Specialized AI Hardware

“AI is reshaping the way infrastructure is built and scaled,” said Lip-Bu Tan, CEO of Intel.

“Scaling AI requires more than accelerators: it requires balanced systems. CPUs and IPUs are essential to providing the performance, efficiency and flexibility that modern AI workloads demand.”

This announcement comes at a time when many hyperscalers are accelerating the adoption of custom Arm-based processors for AI tasks.

Counterpoint Research recently claimed that 90% of AI servers running custom silicon will rely on the Arm instruction set architecture, leaving x86 with only a small share of new deployments.

To ensure Xeon remains relevant, Intel and Google are also jointly developing custom infrastructure processing units designed to handle networking, storage and security workloads.

These IPUs function as ASIC-based accelerators that move infrastructure tasks away from host processors, allowing Xeon processors to focus on running applications.

This separation improves system efficiency and resource allocation in large cloud deployments running AI tools, AI agents, and large language models.

“Processors and infrastructure acceleration remain the cornerstone of AI systems, from training orchestration to inference and deployment,” said Amin Vahdat, senior vice president and chief technologist for AI infrastructure at Google.

Google currently uses Xeon 5 and Xeon 6 processors across multiple service layers, as well as its own custom Arm-based Axion processors.

These deployments continue alongside Google’s custom processors used in other parts of its infrastructure stack.

Intel and Google say their collaboration on processors and IPUs will extend to future generations of systems, including ongoing integration work across the layers of cloud infrastructure.

They argue that infrastructure processors and accelerators remain part of how distributed cloud systems are designed today.

Many workloads running in Google’s data centers require backward compatibility with the x86 architecture, while others need the high single-threaded performance that Xeon processors offer.

These requirements are expected to persist for years, which is why Intel and Google signed this multi-year agreement.
